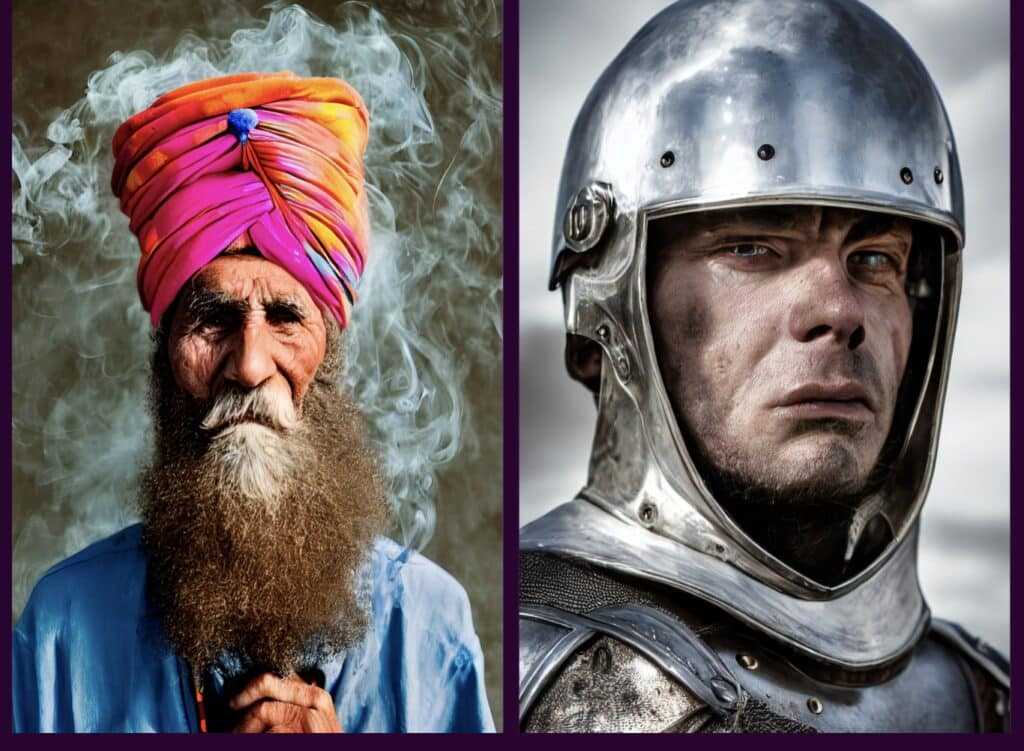

Stable Diffusion image of AI generated faces. Image courtesy Stability AI.

Stability AI recently announced the public launch of Stable Diffusion, a text-to-image AI generator that can run on consumer GPUs and produce custom art within seconds. The internet kind of freaked out. Top of people's minds: should it be legal to generate art that is derivative of copyrighted art, and will artists lose their jobs if AI can draw a custom image in 2 minutes? There is also the problem of bias being baked in when a model is fed mostly images of white people from the internet, along with many other issues of image bias and representation, the kind that lead to police profiling and biased facial recognition systems. And AI generators have the potential to be used to generate fake revenge porn images, among other harmful content.

Stable Diffusion image of AI generated image of a war zone or natural disaster. Image courtesy Stability AI.

This image generator looks quite capable and impressive, based on the published images we have posted for you here, and we're sure that companies already prone to using stock images might shift in this direction for custom artwork for free. However, don't clutch your pearls just yet, because we have news for you. AI is notoriously bad at drawing certain things so far. Such as hands. Yes, this AI image generator can produce custom art that rivals Evangelion at times. What AI can't do very well yet is draw the houses in the background of the image you requested or the details of a spaceship's controls: they come out all pixelated and twisted with the roofline half off the walls or the buttons swirled into strange shapes (see the second to last image below for an example of a really great image that still doesn't make sense on close examination). Getting AI to understand certain relationships is quite tortured. Ask an AI generator like Dalle to draw the image of a connective tissue disorder? My eyes.

We asked Dalle for "Ehlers Danlos Syndrome," an inherited disorder of the connective tissue that results in fragile collagen, after seeing an internet user post what appeared to be art generated from the same request: something resembling a human ribcage and nervous system twisting like a spiral staircase. Interesting.

Dalle creates "Ehlers Danlos Syndrome," an inherited collagen disorder that the AI generator has misinterpreted as affecting the form of hands and feet. Or something.

Dalle drew distorted images of deformed hands. Or were they feet? Some images came back with bruises, others with visible veins, which is probably an interpretation of Ehlers Danlos symptoms such as easy bruising and visible veins. It was hard to tell what the images were depicting, though, because of the distortion. Maybe a rash or a hematoma? Anyway, AI can only associate relationships between images with detailed prompts and good input, so what you get out depends very much on what you feed into the generator.

The new Stable Diffusion generator can use more detailed prompts and should have better relationship associations. The model builds on the work of CompVis and Runway in their popular latent diffusion model, combined with insights from the conditional diffusion models by Stable Diffusion's lead generative AI developer Katherine Crowson, Dall-E 2 by OpenAI, and Imagen by Google Brain. So, lots more than just Dalle.

Dalle recreates the famous William Carlos Williams poem: "So much depends on a red wheelbarrow..."

We fed Dalle a piece of William Carlos Williams' poem about a red wheelbarrow and chickens. It put a raw chicken cutlet in a wheelbarrow, giving English majors everywhere new layers of meaning to analyze. We think most artists will keep their day jobs for now. We tried again: a chicken melting into a wheelbarrow? A chicken with wheelbarrow feet.

Is Stable Diffusion that much different? It appears so, but it's not clear yet just how detailed the prompts are or how much filtering has gone into the published images. Developers are being given first access to test the generator, so you tell us. What seems significantly different is that the core model was trained on LAION-Aesthetics, a soon-to-be-released subset of LAION-5B. LAION-Aesthetics was created with a new CLIP-based model that filtered LAION-5B based on how “beautiful” an image was, building on ratings from the alpha testers of Stable Diffusion. So there is a human layer of interpretation and feedback on image appropriateness that might train this model to be a bit more... tasteful. But that's entirely up to the testers, which raises further potential legal and ethical questions for the future uses of AI image generation.
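To make the filtering idea concrete, here is a minimal sketch of keeping only the images whose predicted "beauty" score clears a threshold. It is not the LAION pipeline itself: the `ImageRecord` type, the example URLs, the scores, and the cutoff of 5.0 are all made up for illustration, and in the real dataset the scores would come from the CLIP-based model rather than being typed in by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageRecord:
    """One image-text pair plus a predicted aesthetic score (hypothetical)."""
    url: str
    caption: str
    aesthetic_score: float  # assumed to be "higher is more beautiful"


def filter_by_aesthetics(records, min_score):
    """Return only the records whose score is at or above min_score."""
    return [r for r in records if r.aesthetic_score >= min_score]


# Made-up sample data standing in for a scored web scrape.
records = [
    ImageRecord("https://example.com/a.jpg", "sunset over mountains", 6.8),
    ImageRecord("https://example.com/b.jpg", "blurry receipt", 3.1),
    ImageRecord("https://example.com/c.jpg", "oil painting of a harbor", 5.0),
]

kept = filter_by_aesthetics(records, min_score=5.0)
print([r.caption for r in kept])
# prints ['sunset over mountains', 'oil painting of a harbor']
```

The point of the sketch is that the threshold is a human choice: move it up or down and a different slice of the internet ends up teaching the model what a good image looks like.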

Stable Diffusion image of AI generated fantasy art. Image courtesy Stability AI.

Details of the Stable Diffusion AI Image Generator Release

The designers of this AI generator say, "As these models were trained on image-text pairs from a broad internet scrape, the model may reproduce some societal biases and produce unsafe content, so open mitigation strategies as well as an open discussion about those biases can bring everyone to this conversation. Learn more about the model strengths and limitations in the model card."

This does raise the issue of perpetuating problems that already exist in the data sets used to feed image generators. In fact, all kinds of issues could arise beyond police profiling, facial recognition technology bias, or underrepresentation.

Dermatology recently faced a reckoning for failing to show images of darker skin with rashes and other skin complaints. Historically it was assumed that rashes and scarring are easier to see on lighter skin, so medical textbooks used photos of light skin only. But skin complaints appear differently on darker skin (a rash may appear gray on dark skin and red on light skin, for example), so patients of color are harmed by a lack of representation in medical texts that can lead to misdiagnosis or under-treatment. This is one of many potential hurdles AI must overcome to create meaningful and ethical content that is usable beyond fantasy art or stock imagery. However, the new image generator also seems to have overcome many previous issues to create gorgeous images from certain prompts.

Stable Diffusion image of AI generated face. Image courtesy Stability AI.

You have to see it for yourself. The new image generator was tested by over 10,000 beta testers creating 1.7 million images a day. It also includes a feature, still under development, that allows you to tell it what you don't want in the output, which could make a big difference in giving the desired results (if you even know what you're going for: the image of the chicken melting into a wheelbarrow has grown on us). If you would like to try out the new Stable Diffusion image generator, more detailed information is available at the following links:

Stability AI Stable Diffusion AI image generator access request form: [https://stability.ai/research-access-form]

You can join the dedicated community for Stable Diffusion here: [https://discord.gg/stablediffusion]

You can find the weights, model card and code here: [https://huggingface.co/CompVis/stable-diffusion]

An optimized development notebook using the HuggingFace diffusers library: [https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_diffusion.ipynb]
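For readers who would rather see code than click through a notebook, here is a rough sketch of what generating an image with the HuggingFace diffusers library can look like. Several things here are assumptions and not details from the announcement: the model id `CompVis/stable-diffusion-v1-4`, the availability of a CUDA GPU, and the `negative_prompt` argument, which exists in recent diffusers releases and may or may not be the same "what you don't want" feature mentioned above. The helper `build_generation_kwargs` is a hypothetical name of our own, and downloading the weights may require accepting the model license on HuggingFace first.

```python
def build_generation_kwargs(prompt, negative_prompt=None, steps=50, guidance_scale=7.5):
    """Collect pipeline arguments in one place (hypothetical helper, not a diffusers API)."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    kwargs = {
        "prompt": prompt,
        "num_inference_steps": steps,      # more steps is slower, often cleaner
        "guidance_scale": guidance_scale,  # how strongly to follow the prompt
    }
    if negative_prompt:
        # Assumption: the installed diffusers version supports negative_prompt.
        kwargs["negative_prompt"] = negative_prompt
    return kwargs


def generate_image(kwargs, model_id="CompVis/stable-diffusion-v1-4", device="cuda"):
    """Load the pipeline and return one PIL image. Needs torch, diffusers and a GPU."""
    # Imported lazily so the helper above works without the heavy dependencies.
    import torch
    from diffusers import StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
    pipe = pipe.to(device)
    return pipe(**kwargs).images[0]
```

With the dependencies installed, something like `generate_image(build_generation_kwargs("a red wheelbarrow beside white chickens", negative_prompt="blurry, distorted hands")).save("wheelbarrow.png")` would write the result to disk. Whether the chickens come out as poultry or cutlets is, as ever, up to the model.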